We’ve been working on an autocomplete/location picker for use across government. I blogged last year about what an autocomplete is and why we are building one.

While we were building it, we had our autocomplete/location picker tested for accessibility. We had completed a fair amount of in-house testing with a range of assistive technologies. We thought working with a third party to test it would be a great way of checking that we were on the right track.

It’s good we did. We found a couple of major issues and the team as a whole learned a lot from the experience. Here’s what we did and what we found out.

Getting the testing done

We had our autocomplete tested by the Digital Accessibility Centre (DAC). They are one of several companies that specialise in accessibility testing and auditing. The majority of DAC’s testers have access needs. They are expert users, but they also depend on services being accessible in their day-to-day lives.

DAC invited us to their offices in Neath to watch them put the autocomplete through its paces.

How the day went

On arrival, we were shown around and introduced to the testers. We sat down with each tester in turn. They told us a bit about their access needs, the assistive technology they rely on and test with, and what they’d be looking for in testing.

Next, they opened our prototype page and had a go at searching for a country.

Here are some of the things our testers found.

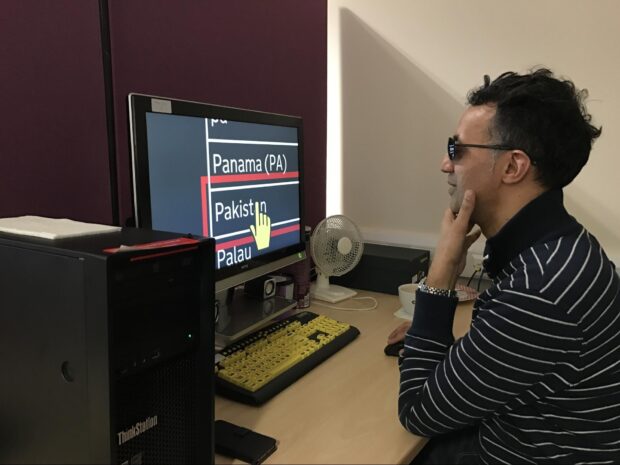

Ziad – 5% vision, extreme blurred vision due to glaucoma

Ziad tests screen magnification with ZoomText, which magnifies his screen 12 times. He inverts his colours to reduce strain on his eyes. He also tests with iPhones, but uses the VoiceOver screen reader because the screen is too small for him to see.

For the most part, the picker worked well for Ziad but we found two potential issues.

Some longer country names may wrap onto two lines. It wasn’t always clear to him that this had happened – the words just looked like separate suggestions that didn’t make much sense.

For some searches, the tool suggested things he wasn’t expecting. For example, a search for ‘in’ brings up Papua New Guinea. This is because the picker uses the official name (The Independent State of Papua New Guinea) and picks out the ‘in’ from ‘Independent’. This isn’t specifically an accessibility issue but it is a potential usability one.
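To make the ‘in’ example concrete, here is a minimal sketch of how matching against both the common name and the official name can surface a result the user wasn’t expecting. The data shape, the sample official names other than Papua New Guinea’s, and the function names are assumptions made for illustration; this is not the picker’s actual matching code. The sketch also shows one possible mitigation: ranking results that only match on the official name below those that match on the common name.

```typescript
// Hypothetical data shape: the post does not describe the picker's real data format.
interface Country {
  name: string;         // common name shown in the suggestion list
  officialName: string; // longer official name, also searched
}

// Illustrative sample data only.
const countries: Country[] = [
  { name: "Papua New Guinea", officialName: "The Independent State of Papua New Guinea" },
  { name: "India", officialName: "The Republic of India" },
  { name: "Indonesia", officialName: "The Republic of Indonesia" },
  { name: "France", officialName: "The French Republic" },
];

// True when any word in `text` starts with `query` (case-insensitive).
function anyWordStartsWith(text: string, query: string): boolean {
  const q = query.trim().toLowerCase();
  if (q === "") return false;
  return text.toLowerCase().split(/\s+/).some((word) => word.startsWith(q));
}

// Returns common names of matching countries. Matches on the common name
// come first; matches found only in the official name come after them.
function suggest(query: string, list: Country[]): string[] {
  const ranked = list
    .map((country, index) => {
      let rank = -1; // -1 means no match
      if (anyWordStartsWith(country.name, query)) rank = 0;
      else if (anyWordStartsWith(country.officialName, query)) rank = 1;
      return { country, index, rank };
    })
    .filter((entry) => entry.rank >= 0);

  // Sort by rank, keeping the original list order within each rank.
  ranked.sort((a, b) => a.rank - b.rank || a.index - b.index);
  return ranked.map((entry) => entry.country.name);
}

const suggestionsForIn = suggest("in", countries);
console.log(suggestionsForIn);
```

With this sample data, Papua New Guinea still appears for ‘in’ because of ‘Independent’, but after India and Indonesia. Whether that ordering is actually less surprising is something that would need testing with users.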

Becs – mobility impaired

Becs uses Dragon NaturallySpeaking, which converts her speech into text and allows her to control her computer with speech commands.

Becs found a major bug. When she dictated ‘France’, the text would appear as normal. When she tried to continue, the field would go blank.

Later we found out this was because Dragon uses a special way to input the text, and autocomplete wasn’t expecting this.
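The post doesn’t say exactly how Dragon enters text, so the following is only a sketch under a stated assumption: that dictated text can land in the field without the keystroke events a component might be listening for. If that is the case, one defensive approach is to treat the field’s current value as the source of truth and reconcile against it, rather than building up the query from key presses. `TextField` and `QueryTracker` are hypothetical names introduced for this example.

```typescript
// Hypothetical stand-in for a text input, so the sketch runs without a browser.
interface TextField {
  value: string;
}

// Tracks the search query by comparing the field's current value with the
// last value seen, instead of relying on key events having fired.
class QueryTracker {
  private field: TextField;
  private onQueryChange: (query: string) => void;
  private lastSeen = "";

  constructor(field: TextField, onQueryChange: (query: string) => void) {
    this.field = field;
    this.onQueryChange = onQueryChange;
  }

  // Returns true and notifies the listener if the value changed since the last call.
  sync(): boolean {
    const current = this.field.value;
    if (current === this.lastSeen) return false;
    this.lastSeen = current;
    this.onQueryChange(current);
    return true;
  }
}

// Simulate dictated text arriving in the field with no key events at all.
const field: TextField = { value: "" };
const seenQueries: string[] = [];
const tracker = new QueryTracker(field, (query) => {
  seenQueries.push(query);
});

field.value = "France";
tracker.sync(); // picks up the change
tracker.sync(); // nothing new, so the listener is not called again
```

In a browser, `sync` could be called from an `input` event listener and, as a fallback, from a short timer, so that the component notices text however it arrived.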

When presented with a list of results, we were interested in what Dragon users would do to try to select one. Would they say ‘choose France’ or do something else?

In Becs’s case, she said ‘press down’ to select it. This seems sensible, but we wonder if users would still do this if the option they wanted was further down the list.

Mike – blind

Mike lost his sight fully 10 years ago. He tested our autocomplete with the JAWS screen reader, the NVDA screen reader and VoiceOver on iOS.

Mike found one large bug in JAWS and NVDA, and a few smaller ones. This really surprised us as we’d tested extensively with them. Later we found that we’d accidentally introduced the issue the day before while making some last-minute tweaks. We learned a lesson there.

We had a good discussion about how best to communicate what type of component the autocomplete is. Letting the user know what it is helps them understand how they should expect to use it.

Instructions can depend on the assistive technology being used. For example, people might use ‘edit field’, ‘edit, combobox’ or ‘combobox’. Mike suggested ‘search filter’ as an option. It’s a bit like search, so we’re going to explore this.
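What a screen reader says about a component depends largely on the roles and states exposed in the markup. As an illustration, the sketch below computes the attributes a text input might carry under the WAI-ARIA 1.2 combobox pattern. It is not necessarily what our autocomplete uses; `ComboboxState` and `comboboxAttributes` are hypothetical names, while the attribute names themselves come from the ARIA specification.

```typescript
// Hypothetical description of the component's current state.
interface ComboboxState {
  listboxId: string;             // id of the element holding the suggestions
  expanded: boolean;             // whether the suggestion list is showing
  activeOptionId: string | null; // id of the highlighted suggestion, if any
}

// Builds the ARIA attributes for the text input of a combobox.
function comboboxAttributes(state: ComboboxState): Record<string, string> {
  const attributes: Record<string, string> = {
    role: "combobox",
    "aria-autocomplete": "list",
    "aria-expanded": String(state.expanded),
    "aria-controls": state.listboxId,
  };
  // Only point at a highlighted option while the list is actually open.
  if (state.expanded && state.activeOptionId !== null) {
    attributes["aria-activedescendant"] = state.activeOptionId;
  }
  return attributes;
}

console.log(
  comboboxAttributes({
    listboxId: "country-suggestions",
    expanded: true,
    activeOptionId: "country-option-2",
  })
);
```

How these attributes are spoken still varies between screen readers, which is why the wording Mike heard differed from one tool to another.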

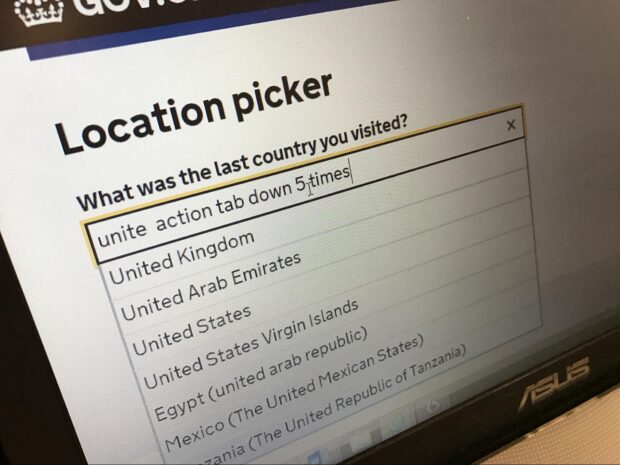

Rob – severe physical disability

Rob has a condition similar to multiple sclerosis that affects his fine motor control and ability to perform tasks. He can type a few letters then uses copy and paste.

Like Becs, he also tests with Dragon, but uses a different version. As expected, Rob found the same issue as Becs: he couldn’t dictate a country.

We wanted to test how Rob would try to pick a result further down the list. For a country picker, the thing you want is likely to be near the top. For something like an autocomplete of schools, the correct result may be much lower as so many schools share similar names.

We gave Rob the challenge of picking ‘Egypt’ – the fifth result in the list. He tried ‘click Egypt’ but this only typed the words ‘click Egypt’ in the input box. He next tried ‘tab down 5 times’, but this also typed in the box. This is more an issue with the way Dragon works than with our picker.

A Dragon user trying ‘click Egypt’ is something we’d like to support. They are clickable options but Dragon doesn’t recognise them as such. We’re going to do some investigation to see if there’s a solution to making Dragon recognise them.

Our conclusions from the day

Getting a third party to test our autocomplete gave us the confidence to know we were going in the right direction. It also found some tricky bugs that we’re going to work on. It was an amazing learning experience for the team.

Watching testing as it happened helped us to see minor issues that aren’t fails, but that we could improve upon.

There are things we could do that may improve the experience for some users, but at the expense of others. We’ll need to balance these needs to make sure no one has a barrier to using the autocomplete, while making it work really well for the majority.

What we did next

We fixed the issues identified by DAC, and then ran our own usability sessions with users with access needs. These helped confirm that the autocomplete worked well for them, and showed why accessibility testing with real users is so important.

Teams can find the latest version of the autocomplete here.

There’s also a specific version for picking countries and territories.

And another government team has extended it to search for government departments and agencies.

Our thanks to DAC and to the people working there.


2 comments

Comment by Renie Nicholls posted on

Have you done anything similar with 'senior citizens'? Many are not senile and are quite computer literate but they still express difficulties manoeuvring around sites such as gov.uk.

Comment by Ed Horsford posted on

Hi Renie,

This piece of accessibility work did not specifically target seniors, but seniors are often included in our other usability testing sessions.

One of the reasons we started work on the autocomplete was because of our prior research on drop-downs and finding that they present significant barriers to those less familiar with computers. We've seen that the autocompletes work very well in these cases.